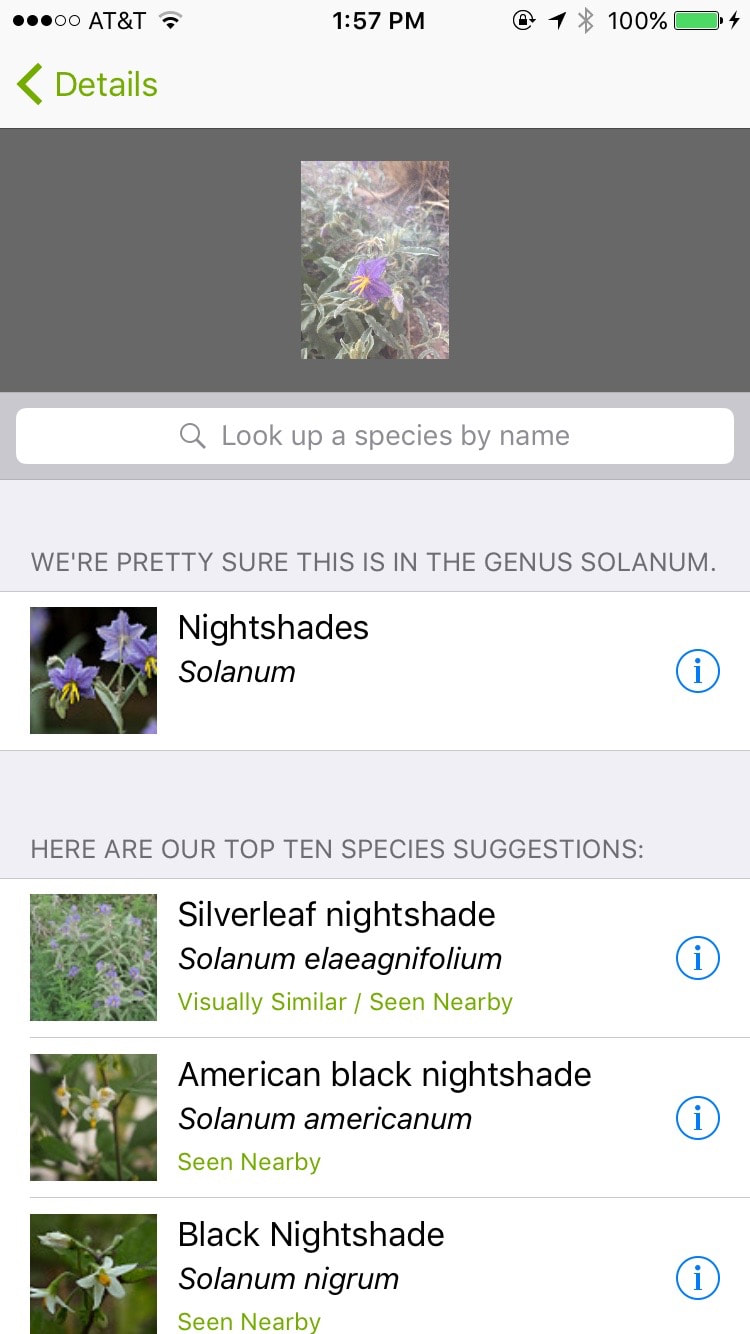

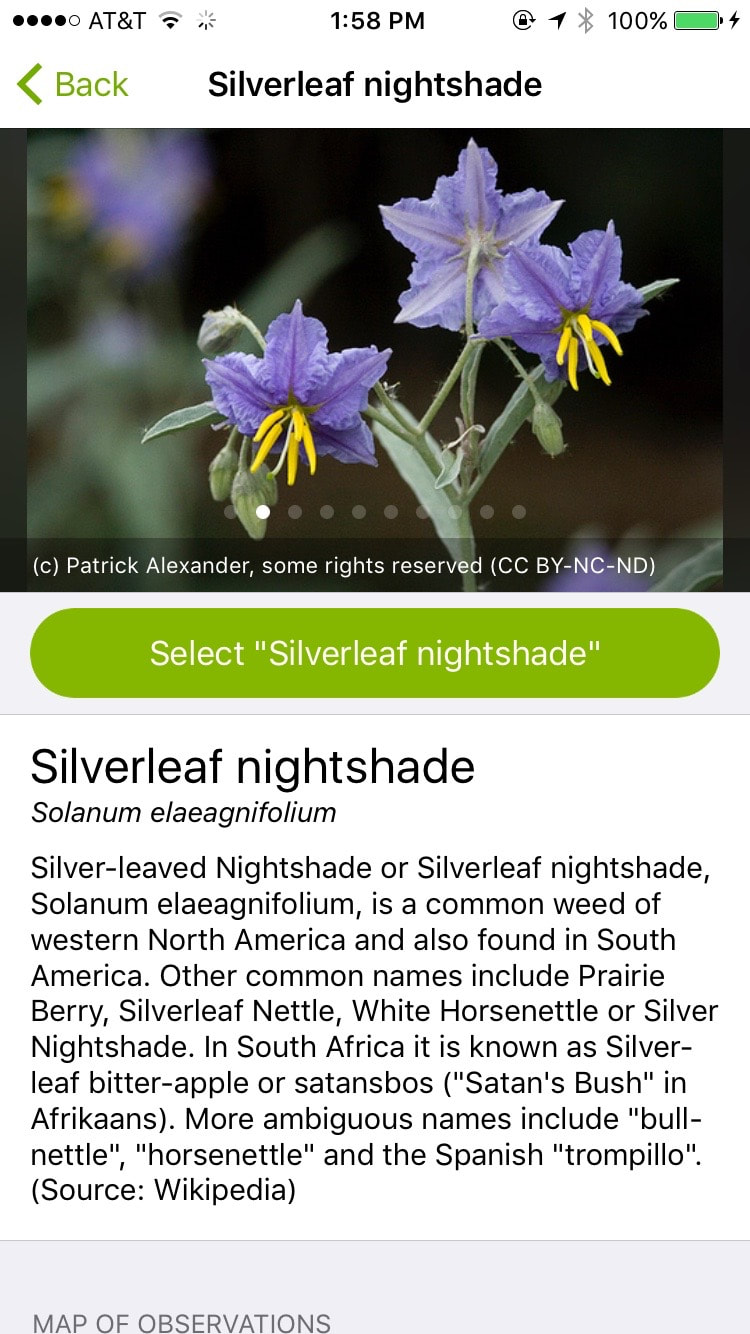

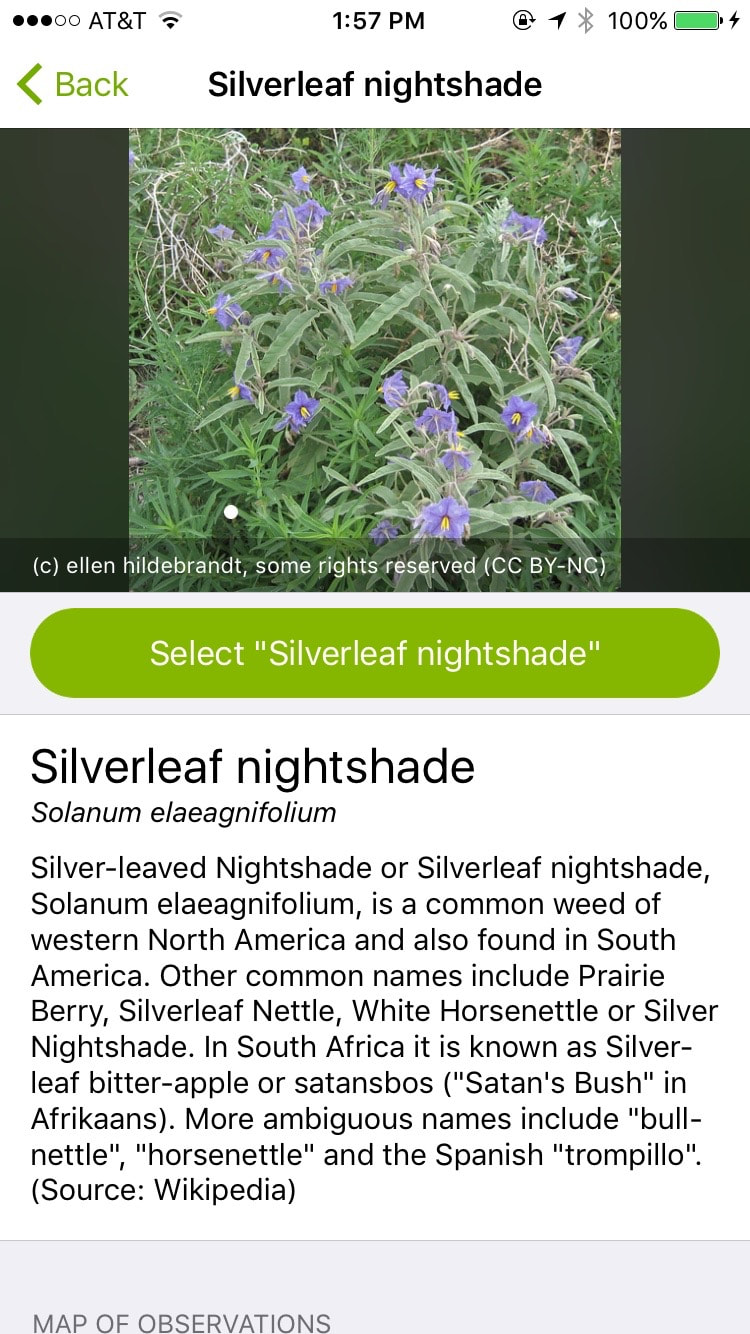

I recently wrote about iNaturalist and a new update includes a powerful new feature I want to share. Updating the app on June 29, I read the version notes and found the understated description above. No fanfare, just “we now give you automatic suggestions”. Which makes sense, because the app is about user photos, and image recognition is the prototypical machine learning/AI application. However, 'universal' image recognition (determining that the subject of a photo is a car, as opposed to a tree) is notoriously much more difficult than specialized applications, such as facial recognition or identifying license plate numbers from images that all contain strings of known characters.

So I kept my expectations in check and tried the new feature. It is both shockingly good and absurdly fast. This interesting tech post about the feature indicates that it is using the TensorFlow open source Machine Intelligence framework from the 'Google Brain Team' and running on dedicated NVIDIA hardware. Even if you aren’t interested in sharing or contributing your photos for research, this app seems to be the ultimate, free, comprehensive field guide.

Here is how it works: I open the app and choose this photo of a flower from my phone as a new observation. It knows where I took the photo from the geotag. I touch 'View Suggestions'. In a few seconds, it comes back with this:

It first tells me that it is pretty sure about the genus, which is pretty cool. It then gives me suggestions on the species and shows me which ones have been seen nearby, based on the geotag. I like the look of the first suggestion, so I touch for more info. This shows me interesting field guide info and also lets me swipe through more pictures. I can see close-ups of the flowers and even compare the leaves of the plant so that I am sure whether or not this is my species.

So that’s pretty cool for flowers, but I’ve seen 'leaf matching' apps before, so let’s see how the same app does with insects and animals.

Wow.

Not everything I try gets me an exact match in the first few gos, but I learn more, faster than I would searching in a book. You can even see a map of the country with observations, to see where each species has been seen before, to help narrow it down.

Up till now I’d been trying photos with fairly representative shapes, with the subject right in the middle. Let’s see how it does on one of the hard problems - a photo with lots of noise and a partial, off-center subject.

This level of image recognition may become commonplace soon, but for me, this still feels pretty magical. It adds a level of instant gratification to my hiking and nature photography, as long as my hiking companions don’t get sick of my stopping to photograph ants. Hopefully real-time recognition integrated into a display in my sunglasses will be out in time for Christmas, because, incredibly, it’s now just a matter of design and engineering before we see such products.

Author: Shane

1 Comment

Justine

8/9/2017 11:22:58 pm

Shane, I expect those funky sunglasses will be available in NY before they arrive anywhere near Saurat :) You may have to burden yourself with buying two pairs and send one pair over here please. J


Authors

Kathryn Tully and Shane Sesta are a married couple, one American and one Brit, who are spending a year traveling across America and writing about their discoveries. Sonny is their rescue cat and fried chicken aficionado.